Free Online vLLM Courses and Certifications

Optimize large language model inference with vLLM's PagedAttention and GPU acceleration techniques for production deployments. Learn deployment strategies, quantization methods, and Kubernetes integration through practical tutorials on YouTube, covering cost optimization and multi-GPU scaling for enterprise LLM serving.

Showing 151 courses

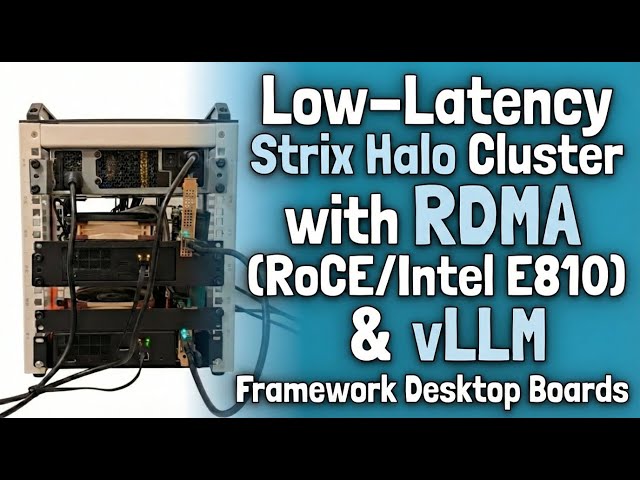

- YouTube

- 32 minutes

- Self-Paced

- Free Video

-

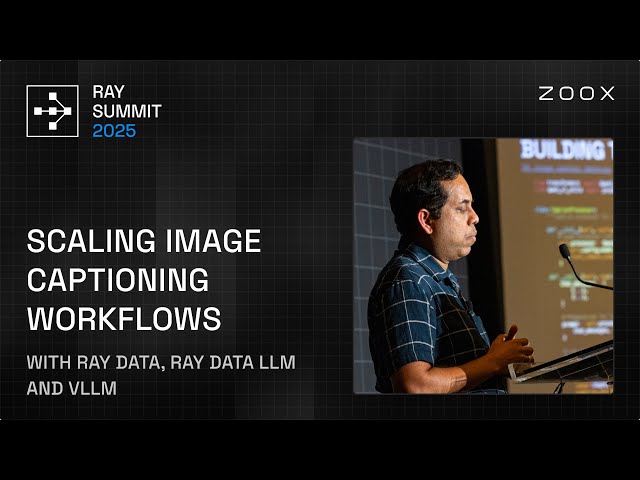

- YouTube

- 16 minutes

- Self-Paced

- Free Video

-

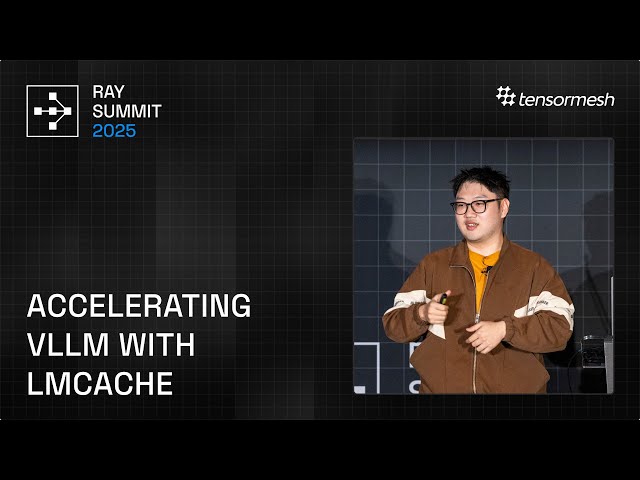

- YouTube

- 28 minutes

- Self-Paced

- Free Video

-

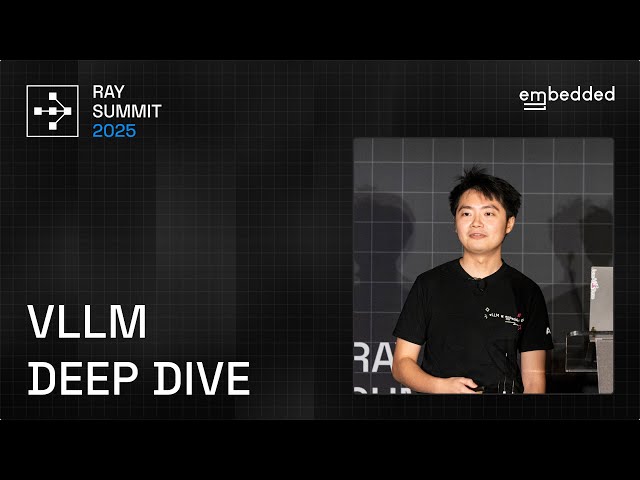

- YouTube

- 36 minutes

- Self-Paced

- Free Video

-

- YouTube

- 51 minutes

- Self-Paced

- Free Video

-

- YouTube

- 31 minutes

- Self-Paced

- Free Video

-

- YouTube

- 31 minutes

- Self-Paced

- Free Video

-

-

- YouTube

- 35 minutes

- Self-Paced

- Free Video

-

- YouTube

- 37 minutes

- Self-Paced

- Free Video

-

- YouTube

- 32 minutes

- Self-Paced

- Free Video

-

- YouTube

- 33 minutes

- Self-Paced

- Free Video

-

- YouTube

- 26 minutes

- Self-Paced

- Free Video

-

- YouTube

- 34 minutes

- Self-Paced

- Free Video

-

- YouTube

- 31 minutes

- Self-Paced

- Free Video

-

- YouTube

- 13 minutes

- Self-Paced

- Free Video

-