Free Online Oxen Courses

Showing 44 courses

Filter by

Filters

-

Level

-

Duration

-

Subject

-

Language

-

-

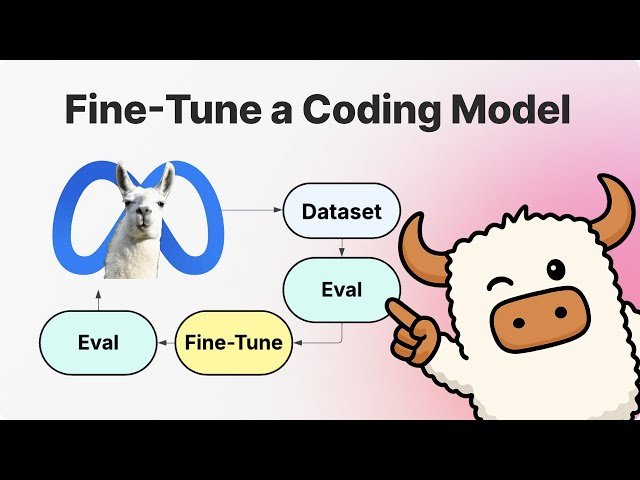

- YouTube, 1 hour 11 minutes, Self-Paced, Free Video
- YouTube, 37 minutes, Self-Paced, Free Video
- YouTube, 45 minutes, Self-Paced, Free Video
- YouTube, 55 minutes, Self-Paced, Free Video
- YouTube, 35 minutes, Self-Paced, Free Video
- YouTube, 43 minutes, Self-Paced, Free Video
- YouTube, 59 minutes, Self-Paced, Free Video
- YouTube, 54 minutes, Self-Paced, Free Video
- YouTube, 51 minutes, Self-Paced, Free Video
- YouTube, 45 minutes, Self-Paced, Free Video
- YouTube, 36 minutes, Self-Paced, Free Video
- YouTube, 57 minutes, Self-Paced, Free Video
- YouTube, 33 minutes, Self-Paced, Free Video
- YouTube, 52 minutes, Self-Paced, Free Video
- YouTube, 22 minutes, Self-Paced, Free Video