Free Online Neural Magic Courses

Showing 11 courses

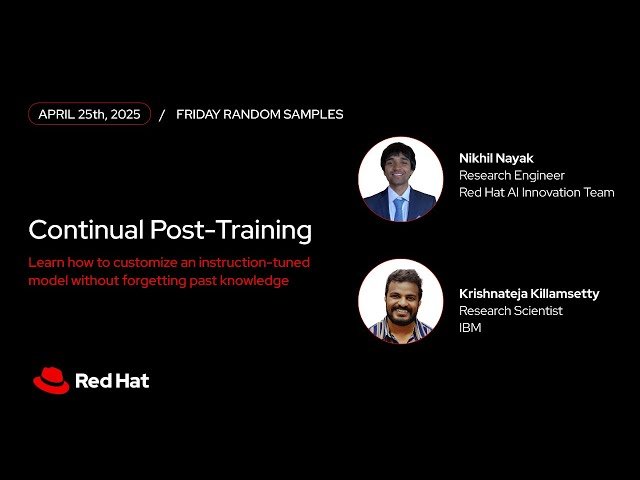

- YouTube, 33 minutes, Self-Paced, Free Video

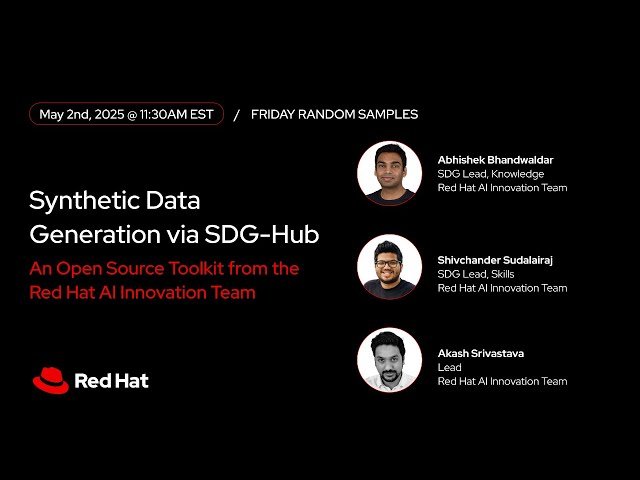

- YouTube, 48 minutes, Self-Paced, Free Video

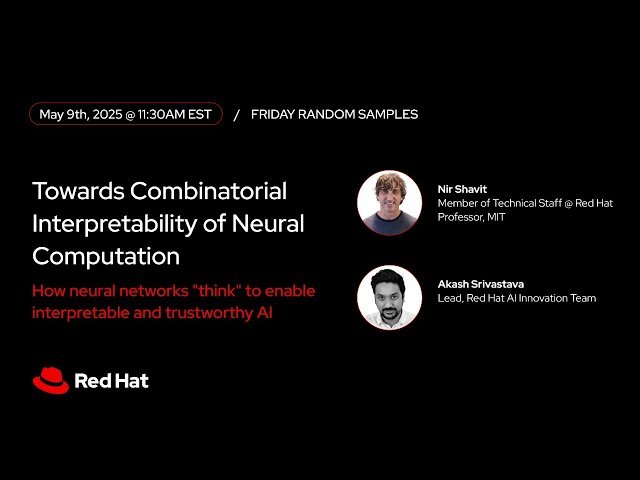

- YouTube, 42 minutes, Self-Paced, Free Video

- YouTube, 53 minutes, Self-Paced, Free Video

- YouTube, 42 minutes, Self-Paced, Free Video

- YouTube, 42 minutes, Self-Paced, Free Video

- YouTube, 50 minutes, Self-Paced, Free Video

- YouTube, 55 minutes, Self-Paced, Free Video

- YouTube, 1 hour 3 minutes, Self-Paced, Free Video

- YouTube, 1 hour, Self-Paced, Free Video

- YouTube, 1 hour 1 minute, Self-Paced, Free Video