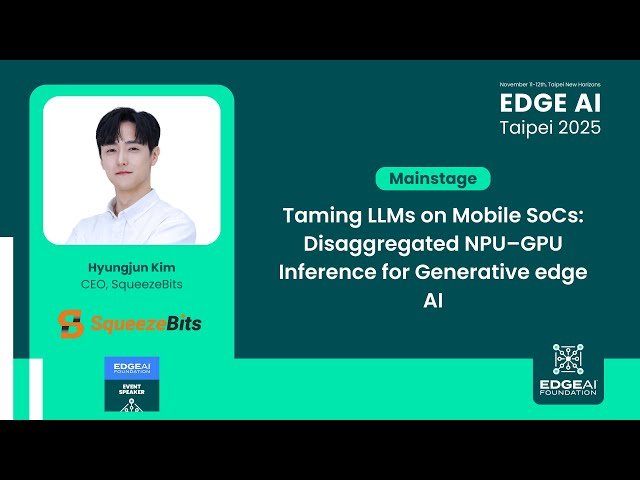

Taming LLMs on Mobile SoCs: Disaggregated NPU–GPU Inference for Generative Edge AI

YouTube videos curated by Class Central.
