SNIA SDC 2025 - KV-Cache Storage Offloading for Efficient Inference in LLMs

YouTube videos curated by Class Central.

Classroom Contents

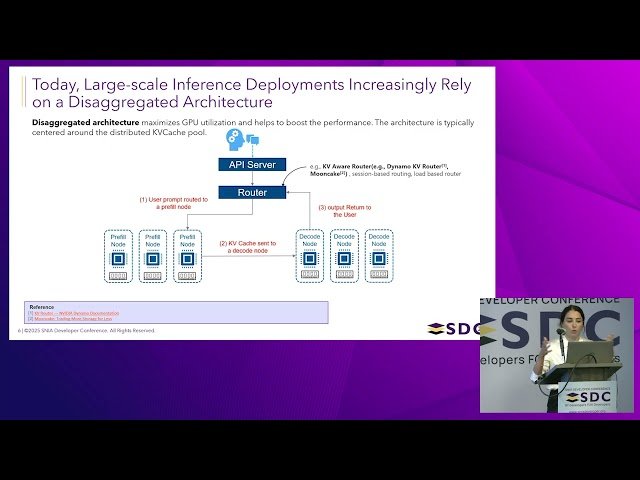

KV-Cache Storage Offloading for Efficient Inference in LLMs
