Class Central Classrooms beta

YouTube videos curated by Class Central.

Classroom Contents

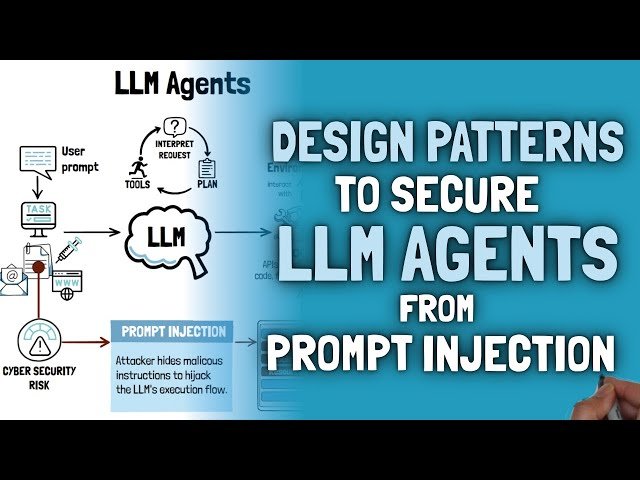

Design Patterns for Securing LLM Agents Against Prompt Injection - Paper Review

- 1 00:00 - Introduction

- 2 03:14 - Problem Space

- 3 05:27 - Prompt Injection Defences

- 4 08:11 - The Problem with Prompt Injection Defences

- 5 09:03 - Core Principle

- 6 09:48 - Pattern 1: Action-Selector

- 7 12:28 - Pattern 2: Plan-Then-Execute

- 8 15:03 - Pattern 3: LLM Map-Reduce

- 9 17:05 - Pattern 4: Dual LLM

- 10 20:27 - Pattern 5: Code-Then-Execute

- 11 24:40 - Pattern 6: Context Minimization

- 12 26:22 - Case Studies

- 13 27:08 - Best Practices for Securing LLM Agents

- 14 31:37 - Engineering Considerations

- 15 32:27 - Summary