Classroom Contents

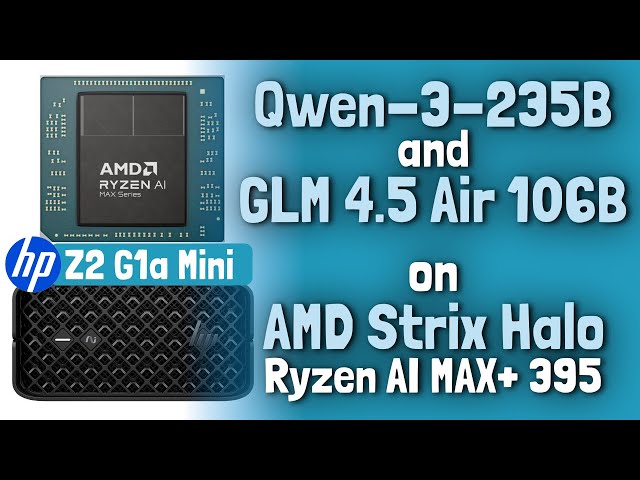

Running Large Language Models on AMD Strix Halo AI Ryzen MAX+ 395 - GLM 4.5-Air-106B and Qwen3-235B Tutorial

- 1 00:00 - Introduction to AMD "Strix Halo" Ryzen AI MAX 395

- 2 01:39 - TL;DR

- 3 04:39 - Running LLMs Locally

- 4 06:46 - AMD "Strix Halo" Mini PCs

- 5 09:36 - HP Z2 G1a Mini Workstation

- 6 11:59 - My Setup Memory + Llama.cpp Builds

- 7 14:00 - Vulkan AMDVLK/RADV/ROCm

- 8 15:33 - AMD ROCm

- 9 17:08 - Fedora-Based Toolboxes

- 10 17:32 - Benchmark Results

- 11 20:50 - Memory Requirements Context Size

- 12 23:57 - Credits

- 13 24:58 - Conclusion