Lecture 22 - FuseCap: Leveraging Large Language Models for Enriched Fused Image Captions

Classroom Contents

CAP6412 Advanced Computer Vision - Spring 2024

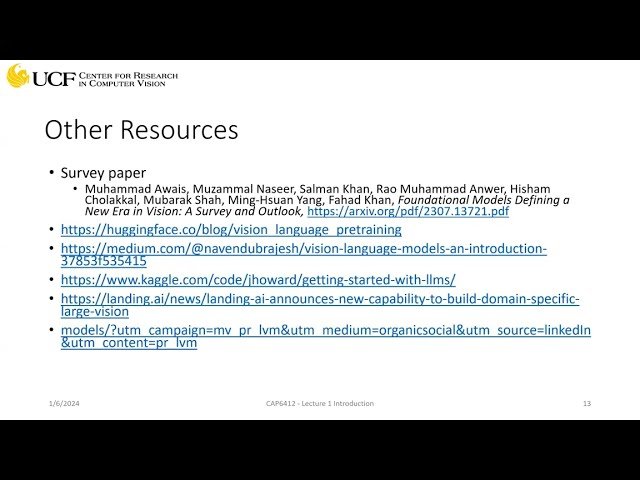

- 1 Lecture 1 - Introduction

- 2 Lecture 2 - Transformers Introduction

- 3 Lecture 3 - CLIP

- 4 Lecture 4 - Visual-Language Models Introduction Part-I: CoCA, PALI

- 5 Lecture 5 - Visual-Language Models Introduction Part-II: FLAMINGO, FLAVA, PAINTER, BLIP-2

- 6 Lecture 6 - Visual-Language Models Introduction Part-III: Image-Bind, Language-Bind, LLaVA

- 7 Lecture 7 - Visual-Language Models Introduction Part-IV: Video ChatGPT, PG-Video LLaVA

- 8 Lecture 8 - FILIP: Fine-grained Interactive Language-Image Pre-Training

- 9 Lecture 9 - HiCLIP: Contrastive Language-Image Pretraining with Hierarchy-aware Attention

- 10 Lecture 10 - BLIP: Bootstrapping Language-Image Pretraining for Unified VL Understanding and Generation

- 11 Lecture 11 - BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and LLMs

- 12 Lecture 12 - MaMMUT: A Simple Architecture for Joint Learning for MultiModal Tasks

- 13 Lecture 13 - MERLOT RESERVE: Neural Script Knowledge through Vision and Language and Sound

- 14 Lecture 14 - Shikra: Unleashing Multimodal LLM's Referential Dialogue Magic

- 15 Lecture 15 - Video-LLaVA: Learning United Visual Representation by Alignment Before Projection

- 16 Lecture 16 - PG-Video-LLaVA: Pixel Grounding Large Video-Language Models

- 17 Lecture 17 - Evaluating Object Hallucination in Large Vision-Language Models

- 18 Lecture 18 - Eyes Wide Shut? Exploring the Visual Shortcomings of Multimodal LLMs

- 19 Lecture 19 - CM3Leon: Scaling Autoregressive Multi-Modal Models: Pretraining and Instruction Tuning

- 20 Lecture 20 - OWLv2: Scaling Open-Vocabulary Object Detection

- 21 Lecture 21 - Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection

- 22 Lecture 22 - FuseCap: Leveraging Large Language Models for Enriched Fused Image Captions