Classroom Contents

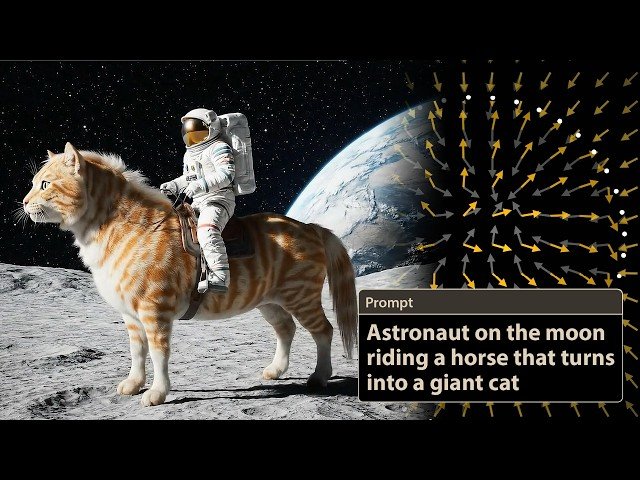

How AI Videos Actually Work - Diffusion Models, CLIP, and the Math of Turning Text into Images

- 1 0:00 - Intro

- 2 3:37 - CLIP

- 3 6:25 - Shared Embedding Space

- 4 8:16 - Diffusion Models & DDPM

- 5 11:44 - Learning Vector Fields

- 6 22:00 - DDIM

- 7 25:25 - DALL·E 2

- 8 26:37 - Conditioning

- 9 30:02 - Guidance

- 10 33:39 - Negative Prompts

- 11 34:27 - Outro

- 12 35:32 - About guest videos + Grant’s Reaction

- 13 6:15 CLIP: Although directly minimizing cosine similarity would push our vectors 180 degrees apart on a single batch, in practice we need CLIP to maximize the uniformity of concepts over the…

- 14 Per Chenyang Yuan: at 10:15, the blurry image that results when removing random noise in DDPM is probably due to a mismatch in noise levels when calling the denoiser. When the denoiser is called on x…

- 15 For the vectors at 31:40 - Some implementations use f(x, t, cat) + alpha * (f(x, t, cat) - f(x, t)), and some use f(x, t) + alpha * (f(x, t, cat) - f(x, t)), where an alpha value of 1 corresponds to no guidance. I cho…

- 16 At 30:30, the unconditional t=1 vector field looks a bit different from what it did at the 17:15 mark. This is the result of different models trained for different parts of the video, and likely a re…
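The two guidance conventions mentioned in note 15 can be compared with a minimal sketch. The function names and the sample vectors below are hypothetical and are not taken from the video; `cond` stands for the conditional model output f(x, t, cat) and `uncond` for the unconditional output f(x, t) at a single point. Under these assumptions, the two forms differ only by an offset of 1 in alpha.

```python
from typing import List

Vector = List[float]


def guide_from_conditional(cond: Vector, uncond: Vector, alpha: float) -> Vector:
    """First form: f(x, t, cat) + alpha * (f(x, t, cat) - f(x, t)).

    With this form, alpha = 0 returns the conditional output unchanged.
    """
    return [c + alpha * (c - u) for c, u in zip(cond, uncond)]


def guide_from_unconditional(cond: Vector, uncond: Vector, alpha: float) -> Vector:
    """Second form: f(x, t) + alpha * (f(x, t, cat) - f(x, t)).

    With this form, alpha = 1 returns the conditional output unchanged,
    which is the "no guidance" case described in the note.
    """
    return [u + alpha * (c - u) for c, u in zip(cond, uncond)]


# Hypothetical model outputs at one (x, t); illustrative values only.
cond = [1.0, 2.0]
uncond = [0.5, 1.0]

no_guidance = guide_from_unconditional(cond, uncond, alpha=1.0)
first_form = guide_from_conditional(cond, uncond, alpha=2.0)
second_form = guide_from_unconditional(cond, uncond, alpha=3.0)
```

Here `first_form` and `second_form` are the same vector, which illustrates that an alpha of a in the first form matches an alpha of a + 1 in the second.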